Goodbye Google (Analytics)!

Let’s start with a little quote:

"New year, new me"

The Good Resolution

Let’s avoid resolutions that we won’t keep. Nevertheless, let’s try to improve ourselves regularly…

To start 2022, I have decided to stop using Google Analytics to track visits to my site. Firstly, because avoiding Google is always a good idea, and secondly, because it is possible to achieve almost the same results without tracking our visitors.

As I mentioned in an article in October, to comply with GDPR we must obtain our visitors' consent before tracking them, which means implementing a consent system (such as a popover or banner). This is not only restrictive but also degrades the user experience.

Searching for an Alternative

My criteria were:

- A free solution (I don't have enough traffic to justify any investment other than my time)
- A solution without cookies (there's no point in leaving Google only to hand too much data to another provider)
- A SaaS solution (consistent with my website being hosted on GitHub Pages, without a server of my own)

There are dozens and dozens of articles listing alternatives to Google Analytics. More or less accurate, more or less up-to-date, more or less interested in your clicks on affiliate links…

I spent some time on this and eventually realized that the best summary can be found in the form of a GitHub repository: Awesome Analytics, which lists over a hundred analytics tools categorized by type.

My Solution: Insights.io

And my choice is a tool that isn’t even listed there!

Note to self: remember to open a PR to propose adding the chosen solution to the list!

I finally chose Insights.io, which offers:

- Free usage up to 3,000 page views per month (I'm far from that)
- No cookies placed in the visitor's browser
- A clean web interface
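To illustrate what "no cookies" can mean in practice, here is a minimal sketch of a page-view event that carries no visitor identifier at all: only the page path, the referrer's host, and a coarse screen-size bucket. The function names, fields, and size thresholds are hypothetical; this is not Insights.io's actual payload format.

```javascript
// Hypothetical sketch: a page-view event with no cookie and no visitor ID.
// Field names and screen-size thresholds are assumptions, not Insights.io's format.
function screenBucket(width) {
  if (width < 768) return "small";
  if (width < 1200) return "medium";
  return "large";
}

function buildPageViewEvent({ href, referrer, screenWidth }) {
  const url = new URL(href);
  let referrerHost = null;
  if (referrer) {
    try {
      // Keep only the referrer's host, never its full URL.
      referrerHost = new URL(referrer).hostname;
    } catch {
      referrerHost = null; // ignore malformed referrers
    }
  }
  return {
    path: url.pathname, // query string and fragment are dropped
    referrerHost,
    screen: screenBucket(screenWidth),
    // No user ID, no session ID, nothing stored in the visitor's browser.
  };
}

// In a browser, it could be called like this:
// buildPageViewEvent({ href: location.href, referrer: document.referrer, screenWidth: window.innerWidth });
```

Since nothing in the event identifies a visitor, each page view can be counted on its own; the trade-off is that unique visitors cannot be followed across pages.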

Of course, the day my site becomes very famous (ah, ah!), I will have to pay or switch to a different tool. And my statistics are not hosted on my own server but on Insights.io’s servers; they don’t offer self-hosting.

So, it’s definitely not the perfect solution.

Nevertheless, the tool is very simple and works well.

Implementation

Getting started is very simple!

Once the account is created, we add a "project" to our dashboard.

We then get a code that serves as an identifier (exactly as with Google Analytics).

Then, we need to add the JavaScript library to all our pages, which will send the information from our site to Insights.io’s servers. There are two methods described in the GitHub repository of the library:

- Installation via npm or yarn:

```shell
npm install insights-js
# or
yarn add insights-js
```

- Directly from the unpkg CDN:

```html
<script src="https://unpkg.com/insights-js"></script>
```

Obtained Statistics

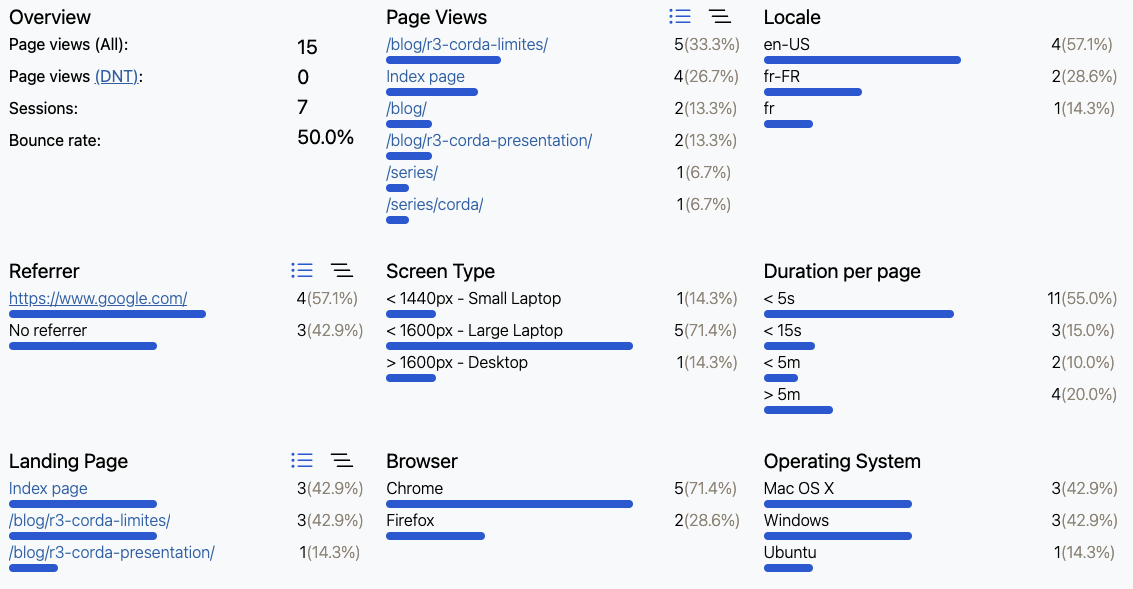

As I mentioned, the obtained statistics are much less comprehensive than those provided by Google Analytics. Here, there’s no geolocation of your visitors or incomprehensible conversion funnels without a PhD in marketing; we go straight to the essentials!

Firstly, the graph of the number of pages visited over 24 hours, by week, month, etc.:

And since the tool is well designed, we also get details about the visits, such as the most viewed pages, time spent on the site, and the OS, browsers, and screen sizes of the visitors. More than enough for a site like mine!

Bonus: Robot Filtering

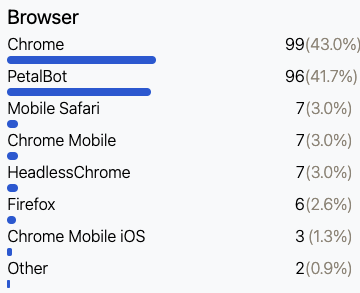

When I implemented Insights.io on my site, I was surprised to see the persistence of visits from a certain "PetalBot":

After some investigation, it turns out to be a search engine indexing bot for PetalSearch, a search engine offered by Huawei. And it’s safe to say that it indexes! Often.

In principle, I have nothing against this indexing, which may bring me some visits someday. But it significantly skews my statistics…

Therefore, I took the liberty of forking the insights-js library and adding robot filtering based on the user agent strings that visitors send.
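The idea can be sketched as follows: before sending a page view, test the visitor's user agent string against a list of known crawler patterns and skip tracking on a match. This is a minimal sketch, not the code from my fork; the pattern list is a small illustrative sample, and `isBot` and `shouldTrack` are hypothetical names.

```javascript
// Minimal sketch of user-agent-based robot filtering (not the code from the fork).
// The pattern list is a small illustrative sample, far from exhaustive.
// No "g" flag on these regexes, so test() keeps no state between calls.
const BOT_PATTERNS = [
  /PetalBot/i, // Huawei's Petal Search crawler
  /Googlebot/i,
  /bingbot/i,
  /YandexBot/i,
];

function isBot(userAgent) {
  // An empty or missing user agent is treated as a regular visitor here (an assumption).
  if (!userAgent) return false;
  return BOT_PATTERNS.some((pattern) => pattern.test(userAgent));
}

// Returns true only when the page view should be reported.
function shouldTrack(userAgent) {
  return !isBot(userAgent);
}

// In the browser, the tracking call would be wrapped like this:
// if (shouldTrack(navigator.userAgent)) { /* send the page view */ }
```

Filtering on the client side like this simply means the robot's visit is never sent, so it never shows up in the statistics.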

Of course, you need to know which user agents belong to robots… And once again, a GitHub repository comes to the rescue, in particular this file!
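Rather than maintaining patterns by hand, such a list can be loaded and compiled into a single regular expression. This sketch assumes the file is a JSON array of objects with a `pattern` field holding a regex source string, which is how some public crawler lists are laid out; adapt the mapping if the file you use is structured differently. `compileBotMatcher` is a hypothetical name.

```javascript
// Sketch: compile a list of crawler patterns into one case-insensitive RegExp.
// Assumes entries look like { "pattern": "PetalBot" }, i.e. regex source strings.
function compileBotMatcher(entries) {
  const sources = entries
    .map((entry) => entry.pattern)
    .filter((source) => typeof source === "string" && source.length > 0)
    // Wrap each pattern in a non-capturing group so its own "|" cannot leak into its neighbours.
    .map((source) => `(?:${source})`);
  if (sources.length === 0) {
    return () => false; // empty list: nothing is considered a robot
  }
  const regex = new RegExp(sources.join("|"), "i");
  return (userAgent) => Boolean(userAgent) && regex.test(userAgent);
}

// Example with a tiny inline list; in practice the entries would come from the JSON file.
const isKnownBot = compileBotMatcher([
  { pattern: "PetalBot" },
  { pattern: "Googlebot" },
  { pattern: "bingbot" },
]);
```

With one combined expression, checking a user agent takes a single `test()` call instead of a loop over every pattern.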

My version of the library with this filtering is available here. However, this time you have to build the library yourself; I won’t do everything, you know! 😘

As a result, I was able to remove the Google Analytics script, as well as the Tarteaucitron library I mentioned a few months ago, which can only speed up the loading of my site. And who benefits from that? My ranking on Google!

Yes, because without Google to index me, who would visit my site? 😅